A bigger brain for the Unitree G1 - Dev w/ G1 Humanoid P.4

Summary

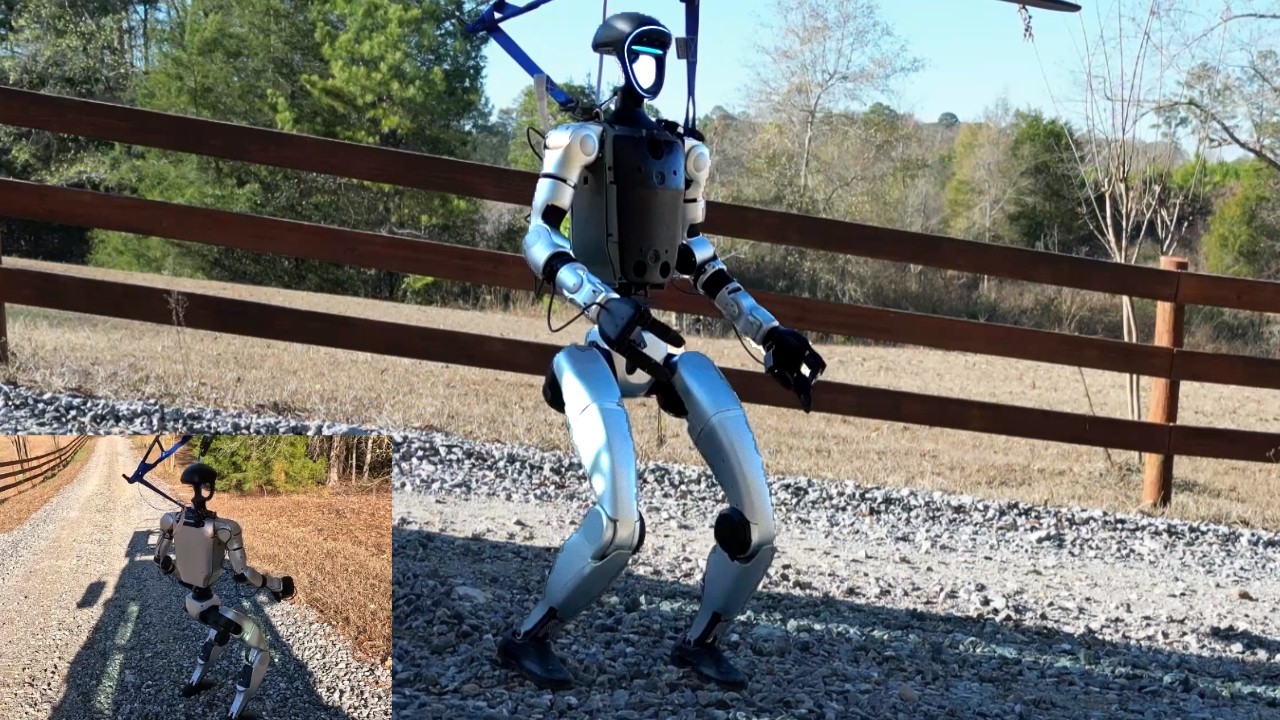

This video walks through integrating a vision language model (VLM) with a Unitree G1 humanoid robot. It offers a practical look at applying VLMs in robotics, which makes it useful for students and educators learning about AI/ML development and real-world AI applications.

Description

Adding a vision language model and procrastinating a little longer about going into the sim.

Unitree G1 series playlist: https://www.youtube.com/playlist?list=PLQVvvaa0QuDdNJ7QbjYeDaQd6g5vfR8km

Neural Networks from Scratch book: https://nnfs.io

Channel membership: https://www.youtube.com/channel/UCfzlCWGWYyIQ0aLC5w48gBQ/join

Discord: https://discord.gg/sentdex

Reddit: https://www.reddit.com/r/sentdex/

Support the content: https://pythonprogramming.net/support-donate/

Twitter: https://twitter.com/sentdex

Instagram: https://instagram.com/sentdex

Facebook: https://www.facebook.com/pythonprogramming.net/

Twitch: https://www.twitch.tv/sentdex

More Videos

43:29 Training a Unitree G1 to Walk w/ Reinforcement Learning

44:11 Unitree G1 Security Disaster

25:21 Testing VLMs and LLMs for robotics w/ the Jetson Thor devkit

28:40 Reinforcement learning with Unitree G1 humanoid - Dev w/ G1 P.5

29:32 Unitree G1 - Moving the arms/hands - Dev w/ G1 Humanoid P.3
