Titans: Learning to Memorize at Test Time (Paper Analysis)

Summary

This video analyzes the paper "Titans: Learning to Memorize at Test Time", which proposes a neural long-term memory module that learns to memorize past context during inference (test time) and works alongside attention. The analysis covers the two building blocks of sequence models that the paper contrasts: recurrent models, which compress the data into a fixed-size hidden state, and attention, which models dependencies across the whole context window at quadratic cost. For students of AI/ML, particularly those interested in the mechanics of large language models and other sequence architectures, it offers insight into current research and core model design principles.
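To make "memorizing at test time" a little more concrete, here is a minimal toy sketch. It is not the paper's module: it assumes a plain linear associative memory (a single matrix) that is updated with one gradient step per key/value pair while a sequence is being processed. The class name `LinearMemory`, its methods, and the learning rate are hypothetical and chosen only for illustration.

```python
# Toy sketch (assumption, not the Titans implementation): a fixed-size
# linear memory that keeps learning during inference.

class LinearMemory:
    def __init__(self, dim, lr=0.5):
        self.dim = dim
        self.lr = lr  # hypothetical step size for the test-time update
        # Fixed-size state: a dim x dim matrix, independent of sequence length.
        self.M = [[0.0] * dim for _ in range(dim)]

    def read(self, key):
        # Retrieve the value currently associated with `key` (computes M @ key).
        return [sum(row[j] * key[j] for j in range(self.dim)) for row in self.M]

    def write(self, key, value):
        # One gradient step on the loss 0.5 * ||M @ key - value||^2.
        # The gradient with respect to M is outer(error, key).
        pred = self.read(key)
        err = [p - v for p, v in zip(pred, value)]
        for i in range(self.dim):
            for j in range(self.dim):
                self.M[i][j] -= self.lr * err[i] * key[j]
        # Return the squared error measured before this update.
        return sum(e * e for e in err)


if __name__ == "__main__":
    mem = LinearMemory(dim=2)
    # Repeatedly present the same association; the error shrinks each time.
    for _ in range(20):
        loss = mem.write([1.0, 0.0], [0.0, 1.0])
    print("last loss:", loss)
    print("recalled:", mem.read([1.0, 0.0]))
```

The point of the sketch is only that the memory has a fixed size yet changes as data arrives at inference time, which is the contrast with attention that the summary describes.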

Description

Paper: https://arxiv.org/abs/2501.00663

Abstract: Over more than a decade there has been an extensive research effort on how to effectively utilize recurrent models and attention. While recurrent models aim to compress the data into a fixed-size memory (called hidden state), attention allows attending to the entire context window, capturing the direct dependencies of all tokens. This more accurate modeling of dependencies, however, comes with a quadratic cost, limiting the model to a fixed-length context. We present a new neural long-term memory module that learns to memorize historical context and helps attention to attend to the current context while utilizing long past information. We show that this neural memory has the advantage of fast parallelizable training while maintaining a fast inference. From a memory perspective, we argue that attention due to its limited context but accurate dependency modeling performs as a short-term memory, while neural memory due to its ability to memorize the data, acts as a long-term, more persistent, memory. Based on these two modules, we introduce a new family of architectures, called Titans, and present three variants to address how one can effectively incorporate memory into this architecture. Our experimental results on language modeling, common-sense reasoning, genomics, and time series tasks show that Titans are more effective than Transformers and recent modern linear recurrent models. They further can effectively scale to larger than 2M context window size with higher accuracy in needle-in-haystack tasks compared to baselines.

Authors: Ali Behrouz, Peilin Zhong, Vahab Mirrokni

Links:

Homepage: https://ykilcher.com

Merch: https://ykilcher.com/merch

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

Discord: https://ykilcher.com/discord

LinkedIn: https://www.linkedin.com/in/ykilcher

If you want to support me, the best thing to do is to share out the content :)

If you want to suppor

More Videos

13:46

13:46I BUILT A FULLY AUTOMATIC MANSPLAINER

47:02

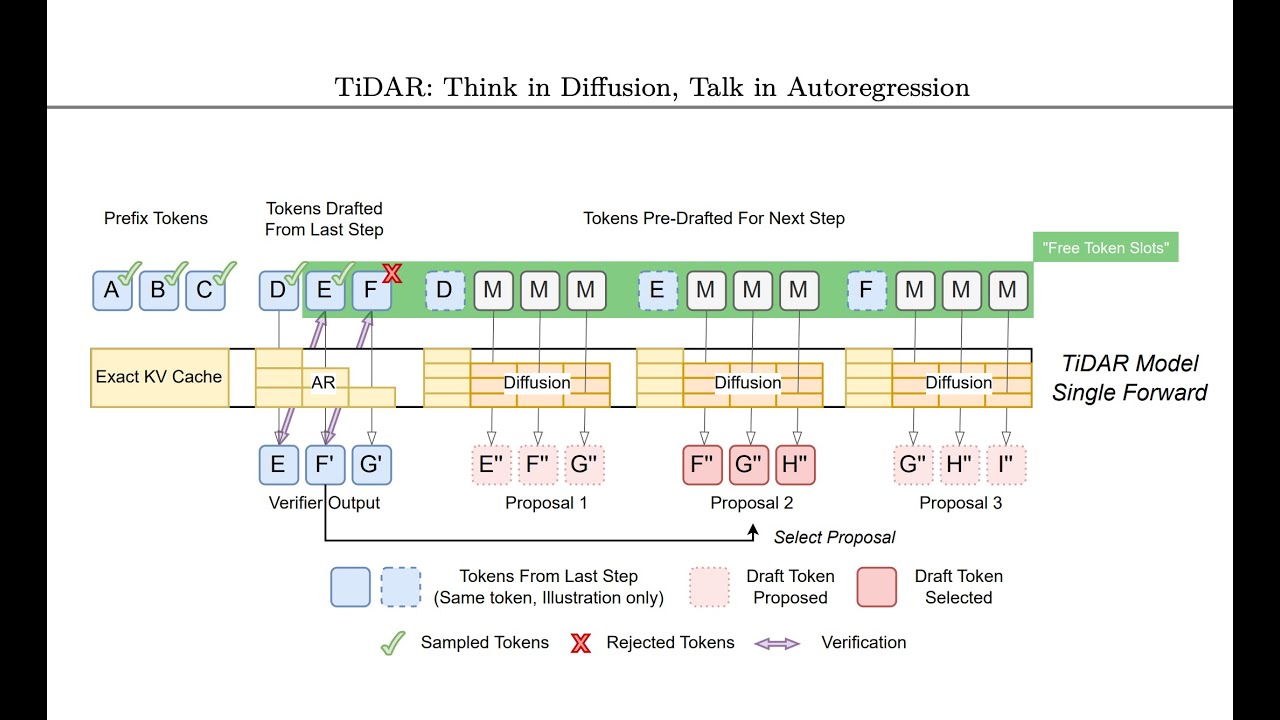

47:02TiDAR: Think in Diffusion, Talk in Autoregression (Paper Analysis)

![[Paper Analysis] The Free Transformer (and some Variational Autoencoder stuff)](https://i.ytimg.com/vi/Nao16-6l6dQ/maxresdefault.jpg) 40:10

40:10[Paper Analysis] The Free Transformer (and some Variational Autoencoder stuff)

![[Video Response] What Cloudflare's code mode misses about MCP and tool calling](https://i.ytimg.com/vi/0bpYCxv2qhw/maxresdefault.jpg) 13:19

13:19[Video Response] What Cloudflare's code mode misses about MCP and tool calling

![[Paper Analysis] On the Theoretical Limitations of Embedding-Based Retrieval (Warning: Rant)](https://i.ytimg.com/vi/zKohTkN0Fyk/maxresdefault.jpg) 48:57

48:57[Paper Analysis] On the Theoretical Limitations of Embedding-Based Retrieval (Warning: Rant)

7:09

7:09