[Paper Analysis] The Free Transformer (and some Variational Autoencoder stuff)

Summary

This video walks through a research paper that extends the decoder Transformer by conditioning its generative process on random latent variables, which are learned without supervision through a variational (VAE-style) procedure. It is useful for students and educators on an 'AI in Education' platform who are learning advanced machine learning concepts, since it explains how Transformer and variational autoencoder ideas fit together in one architecture.
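To make the "variational procedure" concrete, here is a rough sketch of the kind of objective a latent-conditioned autoregressive decoder is usually trained with. The notation is an assumption for illustration and is not taken from the paper: $x = (x_1, \dots, x_T)$ is the token sequence, $z$ the random latent variable, $p(z)$ a fixed prior, $p_\theta$ the decoder, and $q_\phi$ an encoder used only during training. The paper's exact objective may differ in its details.

```latex
% Standard evidence lower bound (ELBO) for a decoder conditioned on a latent z.
% First term: how well the decoder reconstructs x when given z.
% Second term: penalty for the encoder's posterior drifting away from the prior.
\log p_\theta(x) \;\ge\;
  \mathbb{E}_{z \sim q_\phi(z \mid x)}
  \left[ \sum_{t=1}^{T} \log p_\theta\!\left(x_t \mid x_{<t},\, z\right) \right]
  \;-\;
  D_{\mathrm{KL}}\!\left( q_\phi(z \mid x) \,\|\, p(z) \right)
```

At generation time no encoder is needed in this setup: $z$ is sampled from the prior $p(z)$ and the decoder produces tokens conditioned on it.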

Description

https://arxiv.org/abs/2510.17558

Abstract: We propose an extension of the decoder Transformer that conditions its generative process on random latent variables which are learned without supervision thanks to a variational procedure. Experimental evaluations show that allowing such a conditioning translates into substantial improvements on downstream tasks.

Author: François Fleuret

Links:

Homepage: https://ykilcher.com

Merch: https://ykilcher.com/merch

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

Discord: https://ykilcher.com/discord

LinkedIn: https://www.linkedin.com/in/ykilcher

If you want to support me, the best thing to do is to share out the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: https://www.subscribestar.com/yannickilcher

Patreon: https://www.patreon.com/yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n

More Videos

13:46

13:46I BUILT A FULLY AUTOMATIC MANSPLAINER

47:02

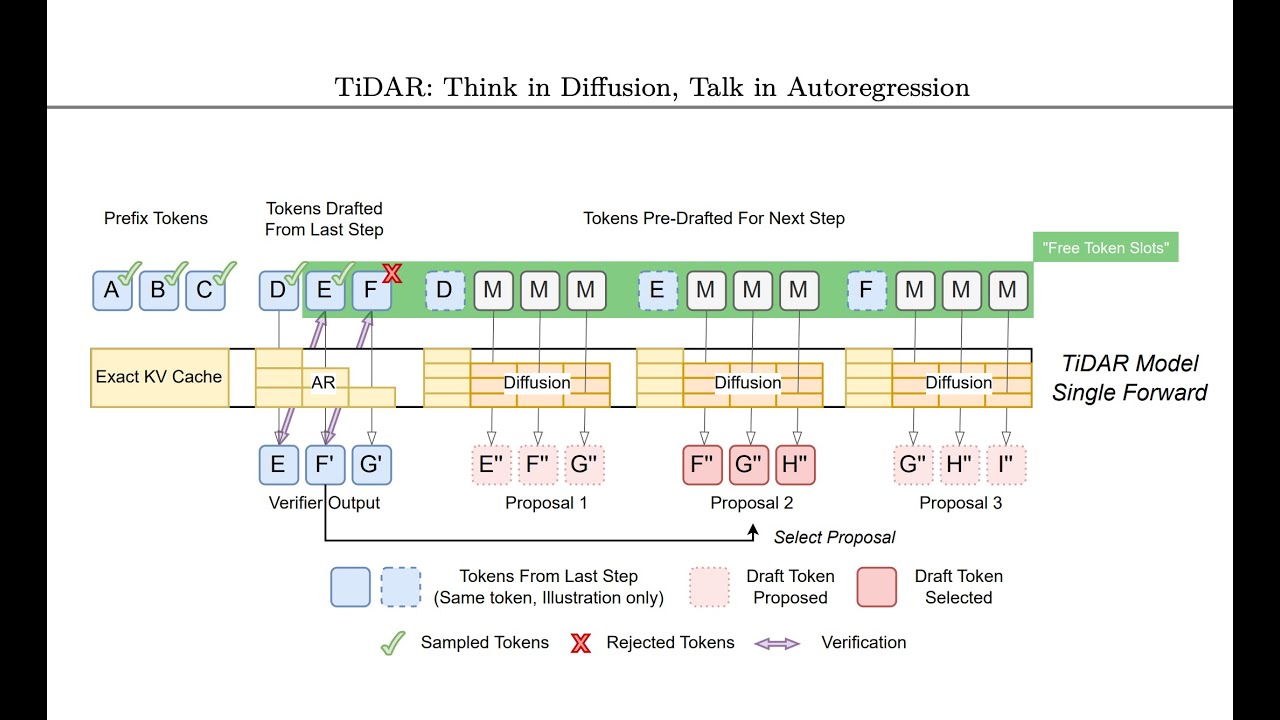

47:02TiDAR: Think in Diffusion, Talk in Autoregression (Paper Analysis)

32:31

32:31Titans: Learning to Memorize at Test Time (Paper Analysis)

![[Video Response] What Cloudflare's code mode misses about MCP and tool calling](https://i.ytimg.com/vi/0bpYCxv2qhw/maxresdefault.jpg) 13:19

13:19[Video Response] What Cloudflare's code mode misses about MCP and tool calling

![[Paper Analysis] On the Theoretical Limitations of Embedding-Based Retrieval (Warning: Rant)](https://i.ytimg.com/vi/zKohTkN0Fyk/maxresdefault.jpg) 48:57

48:57[Paper Analysis] On the Theoretical Limitations of Embedding-Based Retrieval (Warning: Rant)
